Maybe I’m late to the party. Maybe I missed the bus. But when I stumbled across this excellent mini-course, I couldn’t resist building my own “OpenClaw Assistant.”

This post is a journal of what I set out to do, what I actually did, how it went — and where I ended up.

What I set out to do with OpenClaw

My goal was to set up OpenClaw securely and build an “always-on assistant” I can reach through web chat and Telegram.

Oh, and the most important part: the whole experiment has to stay within my $30 budget.

What I actually did

I used this mini-course as my baseline and starting point.

Here’s what I procured:

- Hostinger VPS account (~$15 for a one-month subscription)

- OpenClaw setup on the VPS (included in the cost — follow this guide)

- Anthropic Claude API credits (~$15)

The course is neatly broken into bite-sized steps, and here are the ones I chose to follow:

- Install and secure OpenClaw so that only you and your authorized apps can access your instance (course link)

- Give your OpenClaw assistant a personality, so it can recognize your style and personalize every interaction (course link)

First, I named my assistant: he will hereafter be known as “Garaka.”

The personality is then shaped through four key files:

- SOUL.md – defines the agent's identity: his values, the way he communicates, and his hard limits

- AGENT.md – the operating manual: a checklist and the rules the agent should follow for memory management and external content

- USER.md – a briefing document covering what the agent needs to know about you as a person, distinct from his own identity

- MEMORY.md – the memory system: daily logs plus a curated long-term store that grows and improves over time

Before going further, take time to understand each of these files deeply — this step is crucial (course link)

- Connect “Garaka” to a channel so you can communicate with him beyond the web chat (course link)

A Telegram bot is the fastest way to get started, and its APIs are quite mature.
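To give a sense of how little is involved, here is a minimal sketch of the HTTP call behind a bot message, using the Telegram Bot API's `sendMessage` method and only Python's standard library. The token and chat ID are placeholders, the function names are made up for illustration, and this is not how OpenClaw wires up the channel internally.

```python
import json
import urllib.request

API_BASE = "https://api.telegram.org"


def build_send_message_request(token: str, chat_id: int, text: str) -> urllib.request.Request:
    """Build (but do not send) a Bot API sendMessage request."""
    url = f"{API_BASE}/bot{token}/sendMessage"
    payload = json.dumps({"chat_id": chat_id, "text": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_message(token: str, chat_id: int, text: str) -> dict:
    """Send the message and return Telegram's decoded JSON response."""
    request = build_send_message_request(token, chat_id, text)
    with urllib.request.urlopen(request, timeout=10) as response:
        return json.load(response)


if __name__ == "__main__":
    # Placeholder values: substitute the token from BotFather and your own chat ID.
    req = build_send_message_request("123456:EXAMPLE-TOKEN", 42, "Hello from Garaka")
    print(req.full_url)
```

Splitting request construction from sending keeps the network call in one place, so the rest can be checked without a real bot token.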

- Enable the “always-on” part so you can schedule tasks, reminders, and more as cron jobs (course link)

In my case, I have Garaka send me a daily reflection reminder every evening at 9:30 PM.
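In standard five-field cron syntax, 9:30 PM every day is written `30 21 * * *` (minute, hour, day of month, month, day of week). How OpenClaw itself accepts schedules is covered in the course. The sketch below only illustrates what that expression means by computing the next daily run time in plain Python. The function name is hypothetical, and it uses naive local time with no time zone handling.

```python
from datetime import datetime, time, timedelta

DAILY_REMINDER_CRON = "30 21 * * *"  # minute=30, hour=21, every day


def next_daily_run(now: datetime, cron: str = DAILY_REMINDER_CRON) -> datetime:
    """Return the next run time for a simple daily 'M H * * *' cron expression."""
    minute, hour, day, month, weekday = cron.split()
    if (day, month, weekday) != ("*", "*", "*"):
        raise ValueError("this sketch only handles daily schedules")
    candidate = datetime.combine(now.date(), time(int(hour), int(minute)))
    # If today's slot has already passed (or is exactly now), roll over to tomorrow.
    if candidate <= now:
        candidate += timedelta(days=1)
    return candidate


if __name__ == "__main__":
    print(next_daily_run(datetime.now()))
```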

- Give Garaka skills so he has clear instructions to accomplish specific tasks (course link)

  - OpenClaw comes with a set of pre-defined skills

  - More skills are available on ClawHub and are quite straightforward to install

  - I gave him two skills, document-summary and quick-note, both handy and exactly what they sound like!

- Give Garaka access to web search via the Brave API so he can research topics and deliver richer, more informed responses (course link)

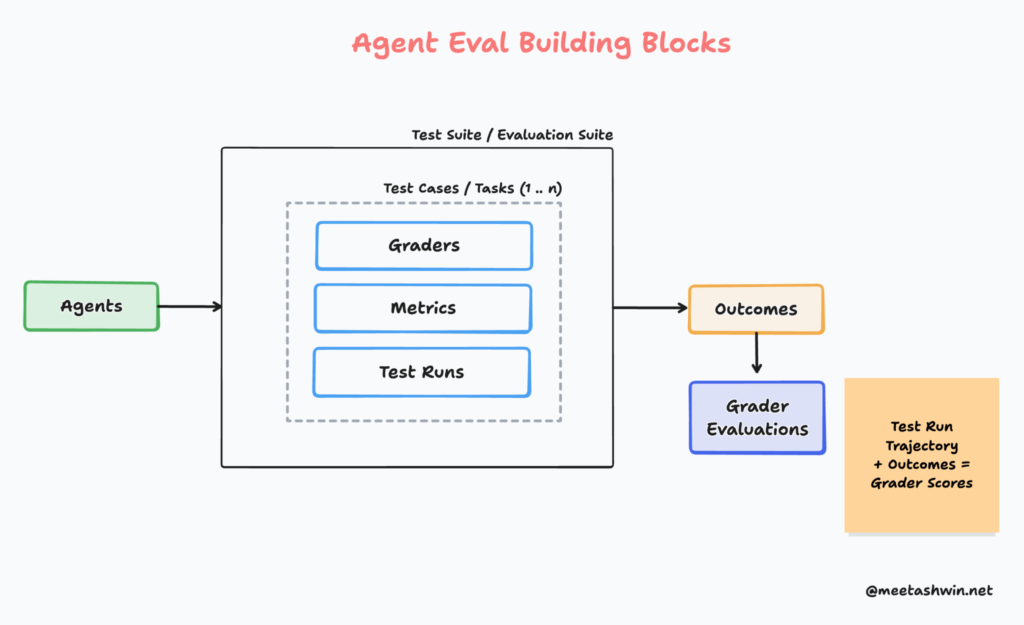
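For the curious, a web search through the Brave Search API boils down to one authenticated GET request. The sketch below builds that request and pulls titles and URLs out of the response using only the standard library. The API key is a placeholder and the helper names are made up; OpenClaw handles this for you once the key is configured, so treat this as an illustration of the underlying call, not its implementation.

```python
import json
import urllib.parse
import urllib.request

BRAVE_SEARCH_URL = "https://api.search.brave.com/res/v1/web/search"


def build_search_request(api_key: str, query: str, count: int = 5) -> urllib.request.Request:
    """Build (but do not send) a Brave web search request."""
    params = urllib.parse.urlencode({"q": query, "count": count})
    return urllib.request.Request(
        f"{BRAVE_SEARCH_URL}?{params}",
        headers={"Accept": "application/json", "X-Subscription-Token": api_key},
    )


def extract_results(response_body: dict) -> list[tuple[str, str]]:
    """Pull (title, url) pairs out of a decoded search response."""
    results = response_body.get("web", {}).get("results", [])
    return [(item.get("title", ""), item.get("url", "")) for item in results]


def search(api_key: str, query: str, count: int = 5) -> list[tuple[str, str]]:
    """Run the search over the network and return (title, url) pairs."""
    request = build_search_request(api_key, query, count)
    with urllib.request.urlopen(request, timeout=10) as response:
        return extract_results(json.load(response))
```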

- Create sub-agents so Garaka can delegate tasks to specialist agents (course link)

  - Sub-agents are ephemeral workers: they operate in their own context, can have their own styles, and hand their outputs back to the main agent (the orchestrator)

  - This is a clean way to separate responsibilities and enable parallel execution

  - I created a “writer” sub-agent that specializes in researching a topic, drafting content, and passing it to the main agent for review and approval
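The orchestrator pattern can be sketched in a few lines of plain Python. This is a toy model, not OpenClaw's API: `SubAgent`, `orchestrate`, and the “checker” agent are hypothetical names, and the lambdas stand in for what would really be LLM calls. It shows only the shape of the idea: each worker gets its own task, runs in parallel, and hands its output back to the main agent.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass
class SubAgent:
    """A stand-in for a specialist worker that only sees the task it is given."""
    name: str
    handle: Callable[[str], str]  # in a real setup this would call a model

    def run(self, task: str) -> str:
        return self.handle(task)


def orchestrate(agents: dict[str, SubAgent], tasks: list[tuple[str, str]]) -> list[str]:
    """Dispatch (agent_name, task) pairs in parallel; return outputs in task order."""
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(agents[name].run, task) for name, task in tasks]
        return [future.result() for future in futures]


if __name__ == "__main__":
    agents = {
        "writer": SubAgent("writer", lambda task: f"[draft] {task}"),
        "checker": SubAgent("checker", lambda task: f"[checked] {task}"),
    }
    for output in orchestrate(agents, [("writer", "intro"), ("checker", "sources")]):
        print(output)
```

Collecting results in submission order keeps the orchestrator's review step deterministic even though the workers run concurrently.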

I chose not to enable email access for now — I want to get more comfortable with Garaka before handing him the keys to my inbox. If you’d like to go further, this course link walks you through giving your assistant access to emails.

How it went

Overall, the entire setup took me about 3 hours. Here are a few observations along the way.

- The OpenClaw setup stalled and aborted a couple of times. I had to delete and recreate the Docker image before things ran smoothly.

- Expect significant model (LLM) usage during installation. I switched to a lower-tier model (Haiku 4.5) to keep costs down and stay within budget.

- ⚠️ Watch out: Don’t make the mistake of selecting an OS when setting up your VPS. You must choose OpenClaw right from the start; otherwise you’ll need to configure it manually and lose access to the templated flow.

Where I ended up with OpenClaw

Here is the outcome after the setup and configuration:

- Working web interface to OpenClaw and chat interface to Garaka

- Garaka rephrasing his working style after initial configuration

- Telegram bot interface to Garaka

- Daily scheduled job to send me a reflection reminder

- Skills enabled for Garaka

To conclude…

- Setting up a personalized agent in a controlled environment was a genuinely enriching experience — and the possibilities feel endless!

- The OpenClaw Mini-course by Aishwarya is a lifesaver for anyone who doesn’t want to get lost in a sea of tutorials or wrestle with OpenClaw’s somewhat unintuitive interface as a beginner.

- Having a team of agents that learns your style, improves with every interaction, and integrates with your tools is a meaningful upgrade over plain LLM interactions!

Have you gone through this journey yourself? If so, I’d love to hear your thoughts and compare notes!